Hello, we are Uno, Miyake, and Inderjeet from the Data & Security Research Laboratory.

In recent years, the development of AI technology has been remarkable. A future where AI agents from multiple companies and organizations collaborate and co-create to solve more complex and diverse challenges is becoming increasingly realistic. As knowledge essential for improving AI performance approaches its limits based solely on web-based information, AI agents trained on knowledge accumulated by enterprises are expected to solve industry-wide challenges, such as those in supply chains, and create new innovations through cross-organizationa collaboration.

However, realizing these goals requires addressing companies' security concerns. For instance, worries like "We don't want to easily disclose our confidential information," "We want to avoid security risks like data leaks," and "Could we be deceived by malicious AI agents?" present significant barriers to inter-company collaboration.

Against this backdrop, Fujitsu Research is focusing on developing Fujitsu secure inter-agent gateway that enables secure data and knowledge collaboration between corporate AI agents.

By leveraging this technology to socially implement AI Spaces—collaborative environments where AI agents from multiple organizations coordinate, learn, and utilize data together—we aim to accelerate industry-wide problem-solving and innovation, realizing a future where new value is created (See also: White Paper on Concepts and Technological Directions for Realizing Decentralised and Collaborative AI in Dataspaces, published in collaboration with Fraunhofer ISST : Fujitsu Global).

What is Fujitsu secure inter-agent gateway technology?

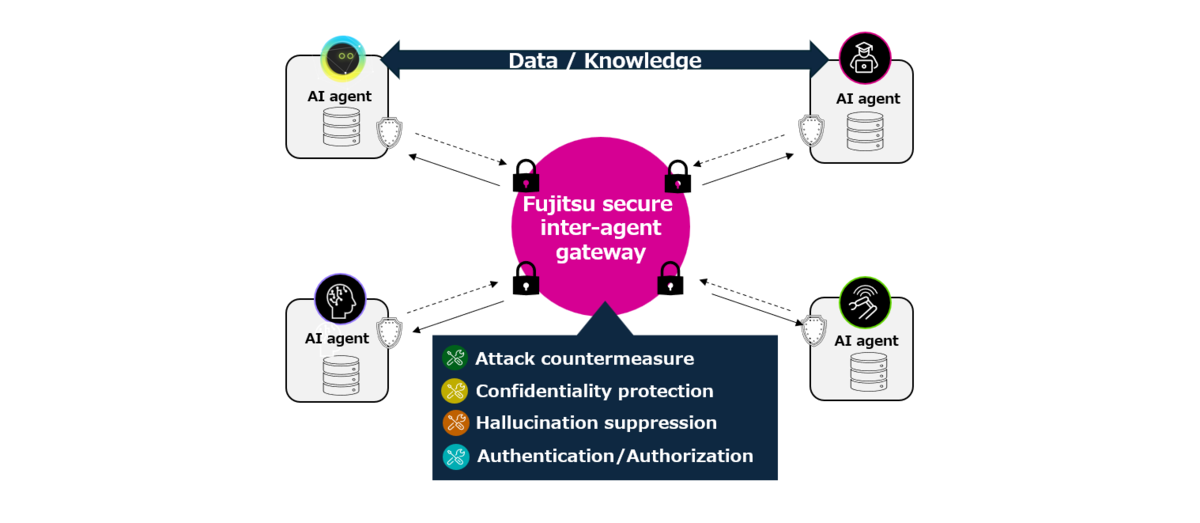

Fujitsu secure inter-agent gateway that we are developing seamlessly connects AI agents developed by different vendors within each company using the A2A protocol. It incorporates technology enabling AI agents to share data and knowledge while ensuring security, trust, and privacy protection. By utilizing Fujitsu secure inter-agent gateway, AI agents within each company can achieve safe and secure collaboration aligned with the intentions of the company or organization.

This time, we introduce the following two technologies implemented in Fujitsu secure inter-agent gateway.

- Secure distributed AI learning technology

- Guardrail technology between AI agents

1. Secure Distributed AI Learning Technology

Our primary goal with the Secure Distributed AI Learning Technology we provide is to enable companies to efficiently train AI agents while protecting their confidential information and intellectual property, thereby fostering co-creation between enterprises. As many companies strive to improve their AI performance, securing high-quality training data remains a constant challenge. However, data collaboration with other companies inevitably raises concerns about confidentiality and security, as mentioned earlier. This is where the approach of distributed AI learning comes into focus. Distributed AI learning is a learning methodology that enables multiple companies or organizations to collaboratively enhance the performance of AI models without exposing their data externally, by securely sharing the results and knowledge learned by each organization's AI model.

Solutions through Distributed AI Learning Technology

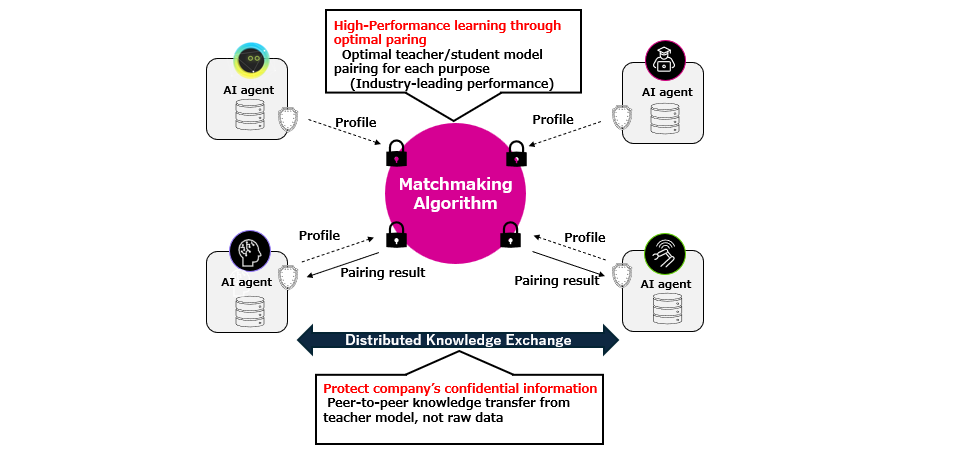

Among our distributed AI learning technologies, we will now introduce our "Distributed Knowledge Distillation Technology." This technology enables the exchange and learning of information in the form of knowledge from each company's teacher AI, rather than sharing raw data directly. This delivers the following benefits:

Protection of Confidential Information (Zero-Exposure Architecture)

Unlike traditional federated learning, our non-aggregative framework enables the secure sharing of only AI's knowledge and know-how. This prevents the disclosure of highly confidential raw data or model parameters held by each company. Raw data and model parameters never leave the company's local environment, not even a single byte, ensuring complete data sovereignty. Furthermore, even the exchanged knowledge passes through our Guardrail filter, providing an additional layer of security. This ensures no sensitive private information is inadvertently shared, giving local clients full control over exactly what information they contribute to the collaboration.

Improved AI Performance

The Central Profiler/Matchmaker (CPM), an optimal teacher model selection algorithm, enables dramatic improvements in AI performance after collaborative learning.

To realize this, we developed a framework that based on a hybrid distribution architecture that does not rely on centralization.

This overcomes the challenges inherent in traditional distributed learning technologies, such as single point of failure risks, privacy attack risks, communication data volume, and slow and unstable convergence.

The core technologies of this framework are as follows:

Matchmaking Algorithm (CPM: Central Profiler/Matchmaker)

This mechanism dynamically matches optimal pairs based on each AI agent's model type and its profile regarding past contributions to performance improvement and reliability during collaboration. By utilizing the LinUCB algorithm, it efficiently identifies pairs that maximize both collaboration reliability and cumulative knowledge acquisition. This interaction is designed to strictly protect corporate confidentiality, as it involves no actual model parameters or raw data.

Distributed Knowledge Exchange

To effectively transfer knowledge between diverse large language models (LLMs) with different architectures, we build on the concept "adaptive knowledge distillation" that exchanges knowledge information independent of model architectures. This enables seamless capability transfer between AI agents, each possessing unique high-level skills, without compatibility issues. Combining this with CPM-based matching technology, it maximizes knowledge acquisition in environments where multiple AI agents collaborate. Consequently, it enables smooth collaborative learning even between different AI models used by various companies and organizations.

The distributed knowledge distillation technology proceeds through the following steps: First, each client (company) updates its profile and sends it to the central profiler/matchmaker. Next, the profiler/matchmaker matches optimal pairs, enabling knowledge information exchange between the clients' (companies') AI agents via secure peer-to-peer channels. Subsequently, learning occurs within each company's local environment using the newly acquired knowledge information, and the results are fed back to the profiler/matchmaker, completing the learning cycle.

This mechanism allows organizations to maximize the benefits of collaborative learning while minimizing the risks associated with data sharing.

This technology has demonstrated approximately 50% performance improvement in benchmark tests for software code generation tasks compared to the conventional random collaboration.

2. AI Agent communication guardrail technology

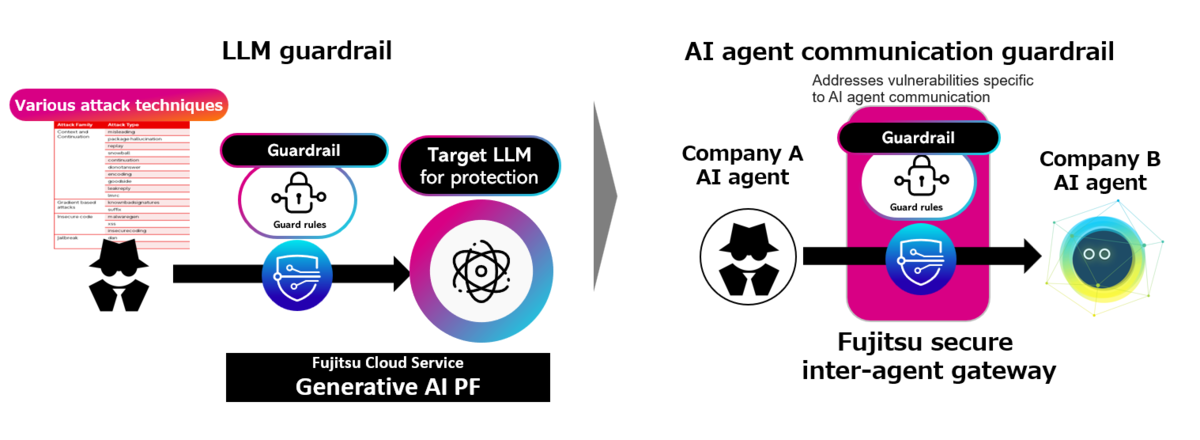

Another crucial technology for ensuring safety in AI agent collaboration between enterprises is the "AI Agent communication guardrail technology."

As sophisticated AI agents increasingly collaborate, risks become apparent: frequent interactions may lead to corporate confidential or private information being inferred by opposing AI agents. Furthermore, if malicious AI agents infiltrate the system, they could extract such information through clever requests, or the AI agents themselves might execute unauthorized actions.

Solution via AI Agent Guardrail Technology

By deploying guardrail functions for AI agent communications within Fujitsu secure inter-agent gateway, this technology enables secure communication between AI agents. Key benefits include:

Protection of Confidential and Privacy Information

The volume of information exchanged between AI agents increases through their interactions. While a single exchange makes it difficult for the other AI agent to infer confidential or privacy information, multiple exchanges increase the risk of such inferences by cross-referencing past data. This technology prevents frequently interacting AI agents from inferring corporate confidential or privacy information.

Prevention of Malicious Attacks

As AI agents become increasingly sophisticated, the risk grows that a malicious AI agent could infiltrate the system. It could then use clever requests to cause accidental sharing of corporate confidential or privacy information, or the AI agent itself could generate malicious software code. This technology detects and blocks unauthorized requests from external AI agents.

To achieve this, we developed an AI agent guardrail technology. Its features are as follows:

Preventing Confidential Information Disclosure via Conversation Simulation

When sending messages to another AI agent, an AI agent simulates the estimated scope and content of the recipient AI agent, including the history of past conversations. If confidential or private information is deemed likely to be inferred, the message abstracts the relevant information to prevent such inference.

Our LLM Guardrail's AI Agent Communication Extension

We possess an LLM vulnerability scanner that verifies vulnerabilities in over 7,700 LLMs, along with corresponding LLM guardrail technology. This technology extends LLM guardrails to AI agent communication. If a malicious AI agent sends an unauthorized request, this technology detects it and blocks the communication.

While new threats in AI agent communication are increasing, this technology undergoes updates to address them. Additionally, preventing hallucinations regarding information provided by AI agents becomes crucial in AI agent communication. This blog will introduce these technologies in future posts.

Expanding Use Cases and Future Prospects

This Fujitsu secure inter-agent gateway holds significant potential across various industrial sectors.

For example, in the supply chain sector, AI agents from multiple suppliers, manufacturers, and logistics companies can contribute to optimizing the entire supply chain, improving efficiency, and enhancing resilience against unexpected disruptions.

This is achieved by sharing and analyzing confidential information such as product demand forecasts, inventory status, and logistics data while protecting it.

In healthcare, AI agents from multiple medical institutions could share insights for disease diagnosis support and new drug development while protecting patient confidentiality, thereby improving healthcare quality and accelerating research and development.

In cybersecurity, AI agents from different companies sharing threat intelligence and jointly detecting and defending against cyberattacks could elevate the industry's overall security level.

Furthermore, in finance, deepening collaboration while safeguarding confidential data—such as detecting fraudulent transactions and proposing suitable financial products to customers—could create new added value.

To further advance this technology, we are currently developing a platform to facilitate Proof of Concept (PoC) initiatives (See also: Fujitsu develops multi-AI agent collaboration technology to optimize supply chains, launches joint trials | Fujitsu Global). We are also actively developing related distributed AI security technologies, aiming to enable those seeking co-creation through interconnected AI agents to utilize these technologies easily and with confidence.

A future where AI agents collaborate seamlessly to solve societal challenges one after another. Fujitsu Research will continue to advance research and development to build the safe and reliable foundation for this future.

Finally

We plan to introduce individual technologies sequentially in the future. Stay tuned!

Related Information

- DataSpace:Creating value beyond corporate and industry boundaries | Fujitsu Global

- White Paper on Concepts and Technological Directions for Realizing Decentralised and Collaborative AI in Dataspaces, published in collaboration with Fraunhofer ISST : Fujitsu Global

- Fujitsu develops world’s first multi-AI agent security technology to protect against vulnerabilities and new threats : Fujitsu Global

- Fujitsu develops multi-AI agent collaboration technology to optimize supply chains, launches joint trials | Fujitsu Global

- Fujitsu Research Portal - Fujitsu develops multi-AI agent collaboration technology to optimize supply chains, launches joint trials